On January 10th, 2023, we saw the anticipated arrival of the next generation of Intel’s server-grade CPUs. Codenamed Sapphire Rapids, these are the 4th Generation Intel® Xeon® Scalable Processors. As you would expect, the new generation brings a host of new features and performance improvements which Intel hope will push performance to new levels. Talking points for this release are built-in accelerators, PCIe 5.0, DDR5 and support for Compute Express Link (CXL). Join us as we take a closer look at these features alongside the official launch.

Built-in accelerators

One of the bigger features of these new CPUs is the set of built-in accelerators that enhance specific workloads. Offloading some computational tasks to these accelerators frees up CPU cycles for other work, so it is an interesting approach. There are five accelerators: Advanced Matrix Extensions (AMX), Dynamic Load Balancer (DLB), Data Streaming Accelerator (DSA), In-Memory Analytics Accelerator (IAA) and QuickAssist Technology (QAT).

Advanced Matrix Extensions

This accelerator targets deep learning inference and training workloads. AI acceleration could see some fantastic applications on these newer-generation CPUs, allowing greater performance without relying as heavily on the GPUs that are predominantly used in this space.
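As a rough illustration of how you might check whether a host exposes AMX, the sketch below parses the CPU feature flags that Linux reports in /proc/cpuinfo. It assumes a Linux system and a kernel recent enough to report the `amx_tile`, `amx_bf16` and `amx_int8` flags. The helper name `amx_flags_present` is our own for this example and not part of any Intel tool.

```python
from pathlib import Path

# Feature flags that Linux reports for AMX-capable CPUs.
AMX_FLAGS = ("amx_tile", "amx_bf16", "amx_int8")


def amx_flags_present(cpuinfo_text: str) -> dict:
    """Return which AMX flags appear in the first 'flags' line of cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags") and ":" in line:
            flags = set(line.split(":", 1)[1].split())
            return {name: name in flags for name in AMX_FLAGS}
    # No flags line found (for example, a non-x86 system).
    return {name: False for name in AMX_FLAGS}


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")  # assumption: running on Linux
    if cpuinfo.exists():
        for name, present in amx_flags_present(cpuinfo.read_text()).items():
            print(f"{name}: {'yes' if present else 'no'}")
    else:
        print("/proc/cpuinfo not found; this check only works on Linux")
```

Seeing the flags only tells you the hardware and kernel support AMX. Whether a given framework actually uses it depends on that software being built with AMX-aware libraries.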

Dynamic Load Balancer

This provides efficient load balancing of data moving between cores, a job that previously had to be done in software. Now there is hardware to accelerate this “queue management”, resulting in balanced performance across CPU cores.

Data Streaming Accelerator

This will optimise data streaming and data transformation, offloading the more common data movements that may otherwise cause overheads. This will free up the processor to execute other tasks rather than use those CPU cycles for copying or transforming data.

In-Memory Analytics Accelerator

A compression and decompression accelerator, this can be utilised for databases to boost throughput. It can be expected that the major database software vendors will optimise for this accelerator.

QuickAssist Technology

Intel’s QAT has been around for a while on various chipsets, but it is now an accelerator on the CPU silicon itself. It enhances data encryption and compression tasks. These workloads can typically consume a lot of CPU resources, so a dedicated accelerator could increase performance while using fewer cores.


Summary of the new accelerators in 4th Generation Intel® Xeon® Scalable Processors, showing a comparison to the previous generation.

PCIe 5.0

Doubling the speed of PCIe 4.0, this new generation of CPU offers much more bandwidth. With the new standard, each PCIe lane provides up to roughly 4GB/s in each direction, meaning around 64GB/s of unidirectional bandwidth in an x16 slot and 128GB/s bidirectional. This will relieve a lot of bandwidth-based bottlenecks, for example with the latest networking interfaces, which are reaching 400Gb/s and beyond. More bandwidth also means fewer lanes are needed for a device such as a GPU, freeing up resources. For example, a GPU that previously required an x16 slot may now be able to run in an x8 slot.
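To see where these figures come from, here is a minimal sketch of the arithmetic. It uses the published per-lane signalling rates (16GT/s for PCIe 4.0, 32GT/s for PCIe 5.0) and the 128b/130b line encoding, and it ignores packet and protocol overhead, so real-world throughput will be somewhat lower. The function names are our own for illustration.

```python
"""Back-of-the-envelope PCIe link bandwidth, per direction."""

# Per-lane signalling rate in GT/s for each PCIe generation.
TRANSFER_RATE_GT_S = {3: 8.0, 4: 16.0, 5: 32.0}

# PCIe 3.0 onwards uses 128b/130b encoding: 128 payload bits per 130 bits sent.
ENCODING_EFFICIENCY = 128 / 130


def lane_bandwidth_gb_s(generation: int) -> float:
    """Usable bandwidth of one lane in one direction, in GB/s."""
    return TRANSFER_RATE_GT_S[generation] * ENCODING_EFFICIENCY / 8


def link_bandwidth_gb_s(generation: int, lanes: int) -> float:
    """Usable bandwidth of a link with `lanes` lanes in one direction, in GB/s."""
    return lane_bandwidth_gb_s(generation) * lanes


if __name__ == "__main__":
    nic_gb_s = 400 / 8  # a 400Gb/s network interface needs 50GB/s
    for generation, lanes in [(4, 16), (5, 8), (5, 16)]:
        bandwidth = link_bandwidth_gb_s(generation, lanes)
        verdict = "enough" if bandwidth >= nic_gb_s else "not enough"
        print(f"PCIe {generation}.0 x{lanes}: {bandwidth:.1f} GB/s per direction, "
              f"{verdict} for a 400Gb/s NIC")
```

The numbers show why a PCIe 5.0 x8 link matches a PCIe 4.0 x16 link, and why a 400Gb/s adapter (50GB/s) only fits comfortably in a PCIe 5.0 x16 slot, which offers about 63GB/s per direction.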

DDR5

The 4th generation of Intel Xeon Scalable processors is also ready for the latest and greatest memory standard, DDR5. This means faster speeds, more capacity, and lower power consumption. Essentially, we are looking at memory modules running at 4800MT/s today (previously, server-grade CPUs topped out at 3200MT/s on DDR4), and this is expected to scale up as the technology matures, with up to 8400MT/s possible in the future. Higher capacity modules are also possible, with DIMMs in the future potentially reaching 2TB each! The modules are also less power hungry, delivering better performance at lower power consumption. In addition, each module now has power management circuitry on the DIMM itself, regulating voltages and improving signal integrity.
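As a rough guide to what the speed bump means for bandwidth, the sketch below works out theoretical peak figures. It treats the 3200 and 4800 numbers as mega-transfers per second and assumes a 64-bit data path per memory channel. The 8-channel figure in the example is an assumption about the platform configuration rather than something measured here, and sustained bandwidth in practice will be lower than these peaks.

```python
def peak_bandwidth_gb_s(transfer_rate_mt_s: float,
                        channels: int = 1,
                        bus_width_bits: int = 64) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    Each transfer moves bus_width_bits / 8 bytes per channel.
    """
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mt_s * bytes_per_transfer * channels / 1000


if __name__ == "__main__":
    ddr4 = peak_bandwidth_gb_s(3200)
    ddr5 = peak_bandwidth_gb_s(4800)
    print(f"DDR4-3200: {ddr4:.1f} GB/s per channel")
    print(f"DDR5-4800: {ddr5:.1f} GB/s per channel")
    print(f"Uplift:    {(ddr5 / ddr4 - 1) * 100:.0f}%")

    # Assumption: 8 memory channels populated per socket.
    print(f"DDR5-4800 x 8 channels: {peak_bandwidth_gb_s(4800, channels=8):.1f} GB/s")
```

On these assumptions, moving from 3200 to 4800 is a 50% increase in peak bandwidth per channel before any other platform differences are taken into account.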

You can read our in-depth review of DDR5 and what it can mean for servers here.

Compute Express Link (CXL)

An open standard interconnect, CXL is built upon PCIe 5.0 and provides unified memory access between the CPU and connected devices. You can think of this as the devices and CPU being able to read and write data between each other’s memory, through the CXL protocol. This will lower latency and can offer more memory resources as devices can use and even extend the main system memory.

These devices may include accelerators that do not have their own on-board memory and can instead access the main system DRAM. Making system memory available to devices such as GPUs and FPGAs, and vice versa, is also a possibility. There will also be memory expansion devices, offering more resources overall to the system and enabling larger than standard capacity, potentially at a lower cost by utilising less costly DDR4. We will no doubt see more devices and more CXL support as the technology matures. For more information on CXL, please read our blog article here.


4th Generation Intel® Xeon® Scalable Processors overview.

Performance

We will be taking a much closer look at performance in a future article, but we have managed to get our hands on some sample CPUs in Boston Labs pre-launch and have been putting them through their paces.

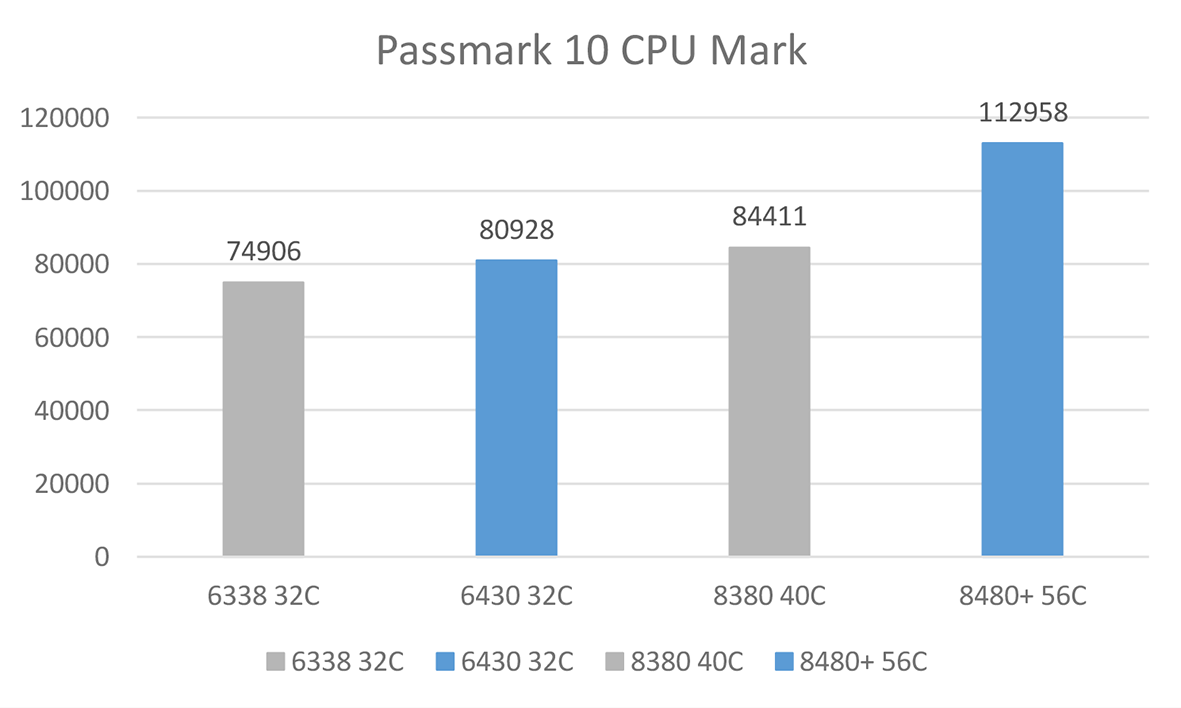

For a quick test we installed Windows 10 and ran Cinebench R23 and Passmark 10 CPU Mark.

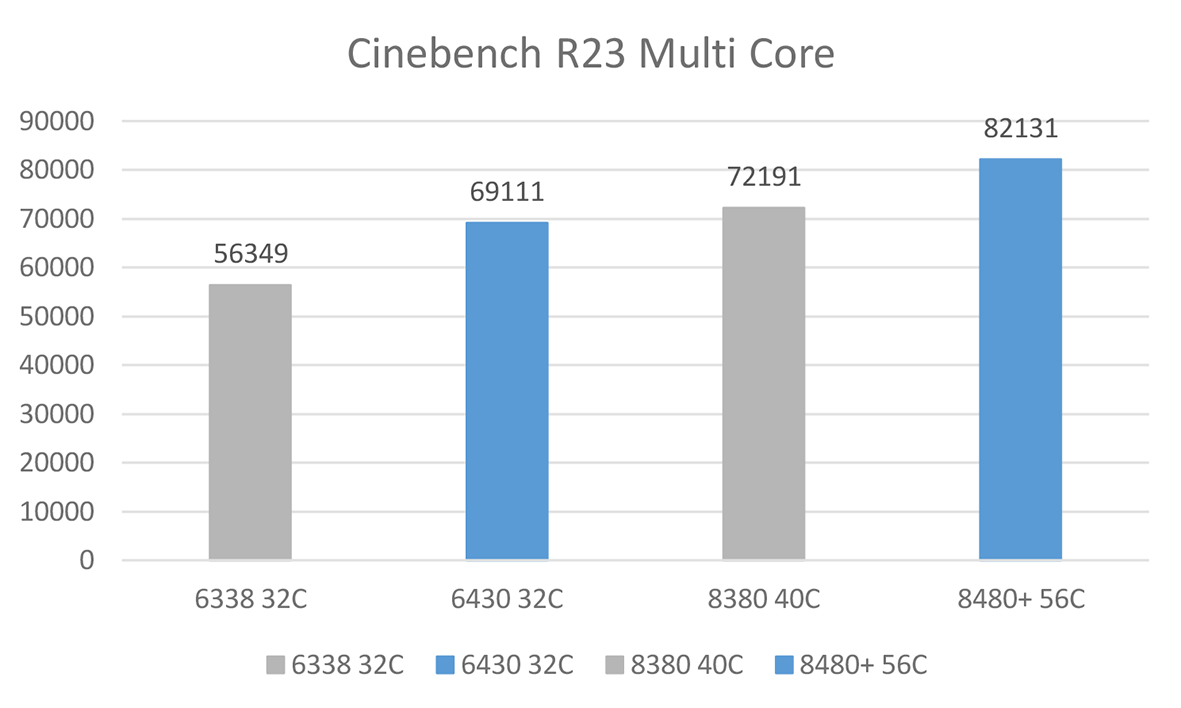

Cinebench

The Cinebench R23 benchmark renders a scene using all CPU cores to give an indication of multi-core performance. In Cinebench we see a good increase in performance over the previous generation, with the new 32-core 6430 SKU showing a ~23% increase in score over a 6338. For the 3rd Generation Intel® Xeon® Scalable Processors, the 40-core 8380 was the top-end SKU, whereas here we have a 56-core Sapphire Rapids 8480+ processor. Comparing these flagships is not a like-for-like comparison on core count, but we still see an increase in Cinebench performance of about 13%. I thought we would see a bigger increase, and we will certainly scrutinise the performance with other benchmarks in a future update to ensure any 8480+ optimisations are applied.

Passmark

Passmark runs a series of CPU benchmarks to test overall performance. Here we saw a smaller increase of only 8% between the 32-core SKUs, but a whopping 34% performance gain between the top-end SKUs. Here the 56 cores are showing their muscle.

Our thoughts

It’s always exciting to see a new release of server-grade CPUs and what new features they bring to the table. Initial impressions are good, but we will be diving into the full suite of benchmarks in an upcoming review. The onboard accelerators could prove to be a great concept and enable customers with certain workloads to see huge benefits. Stay tuned for our follow-up with more benchmarks and a deep dive into some accelerator-specific tests to really show off the enhancements of this generation.

Boston Labs is all about enabling our customers to make informed decisions in selecting the right hardware, software, and overall solution for their specific challenges. If you’d like to request a test drive of 4th Generation Intel® Xeon® Scalable Processors, please get in contact by emailing [email protected] or call us on 01727 876100 and one of our experienced sales engineers will gladly guide you through building the perfect solution, just for you.

Author:

Sukhdip Mander

Field Application Engineer, Boston Limited