A short while ago, we were excited to receive the IPU-M2000 system into Boston Labs and have been eager to unbox it and start opening up test slots for our partners and key customers.

The day has finally arrived: today we will be installing this system and taking a more in-depth look at Graphcore's latest compute engine for IPU-based machine intelligence, the IPU-M2000!

If you'd like to run a proof-of-concept remote test on this stand-alone unit or our POD 16 cluster, please get in contact with details of how an IPU could be a good fit for your workload and we'll gladly assign you a slot. We encourage you to act quickly, since demand for this system is expected to be high. Our test drive scheme allows you to test your own code ahead of purchase and validate Graphcore's extremely promising benchmark data for yourself.

Packaging and content

Our Graphcore IPU-M2000 arrived in plain cardboard packaging that did not have any Graphcore branding but was packed neatly and well protected for shipping over long or short distances.

As you can see from the images, the package comes with a rail kit, an accessory kit, power cables and, of course, the Graphcore IPU-M2000 itself.

In total, the package contained the Graphcore IPU-M2000 and three accessory boxes. The first box contains:

- 1x 1.5m QSFP cable to connect the IPU-M2000 to the server (black)

- 1x 1.5m Cat5e cable to connect the IPU-M2000 to the server (blue)

- 1x 0.6m Cat5e cable to connect management ports between IPU-M2000 units (blue)

- 4x 0.3m OSFP cables to connect IPU-Link ports between IPU-M2000 units (black)

- 2x 0.15m Cat5e cables to connect Sync-Link ports between IPU-M2000 units (red)

The second box contains 2x 1.45m IEC60320 AC power cables for the IPU-M2000, and the third box holds 1x slider kit for installation in a rack.

Note: not all of the supplied cables are used in every IPU-M2000 direct attach configuration; some are only needed when scaling up and extending the IPU fabric.

The IPU-M2000 rail kit comprises two mated inner and outer rack rails and an accessory bag containing screws. The inner rail fixes to the body of the IPU-M2000 and the outer rail fixes to the vertical rack rails in the server cabinet.

Graphcore IPU M2000 - Exterior

The IPU-M2000 front panel has a sleek, smooth appearance and contains (from left to right in the figure):

- 2 RNIC ports for connection to the server

- 8 Sync-Link ports for connection between units

- 2 management GbE ports for connection to the server

- 2 GW-Link ports – not used in direct attach systems

- 8 IPU-Link ports for connection between units

The IPU-M2000 back panel contains two power connectors, five fan units (N+1 redundant and removable) and a unit QR code.

The QR code is a neat feature: it gives easy access to the following information for each IPU-M2000:

- Company name (Graphcore)

- Serial number

- Part numbers

- BMC Ethernet MAC address

- GW Ethernet MAC address

- Graphcore support web URL

Graphcore IPU M2000 - Interior

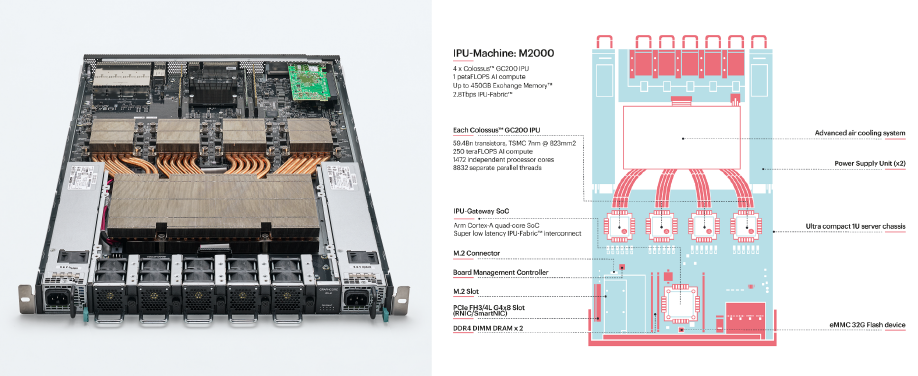

Built around the powerful Colossus Mk2 IPU, which was designed from the ground up for AI, the IPU-M2000 is the essential compute engine for IPU-based machine intelligence. In a slim 1U blade, it delivers 1 petaFLOP of AI compute and up to 526GB of Exchange-Memory™.

Each IPU-Machine contains four 7nm GC200 IPU chips. According to Graphcore, up to 64,000 IPUs can be connected together to form a massively parallel processor with up to 16 exaflops of compute and petabytes of memory, enough to support models with trillions of parameters.

Each Colossus GC200 IPU contains 59.4 billion transistors and provides 250 teraFLOPS of AI compute, 1472 independent processor cores and 8832 separate parallel threads.
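As a quick sanity check, the per-IPU figures above multiply out to the per-machine numbers in the key features list, and to Graphcore's 16-exaflop scale-out claim. The short Python sketch below does that arithmetic; it assumes peak figures scale linearly with chip count, which gives an upper bound rather than a measured result.

```python
# Per-IPU figures for the Colossus Mk2 GC200, as quoted in this article.
GC200_TFLOPS = 250      # peak AI compute per IPU, in teraFLOPS
GC200_CORES = 1472      # independent processor cores per IPU
GC200_THREADS = 8832    # parallel threads per IPU

IPUS_PER_M2000 = 4      # four GC200 chips per IPU-M2000


def m2000_totals(ipus: int = IPUS_PER_M2000) -> dict:
    """Aggregate the per-IPU figures over `ipus` chips (assumes linear scaling)."""
    return {
        "petaflops": ipus * GC200_TFLOPS / 1_000,
        "cores": ipus * GC200_CORES,
        "threads": ipus * GC200_THREADS,
        "threads_per_core": GC200_THREADS // GC200_CORES,
    }


def max_scale_exaflops(ipus: int = 64_000) -> float:
    """Peak AI compute for `ipus` chips in exaFLOPS (1 exaFLOP = 1,000,000 teraFLOPS)."""
    return ipus * GC200_TFLOPS / 1_000_000


if __name__ == "__main__":
    print(m2000_totals())        # {'petaflops': 1.0, 'cores': 5888, 'threads': 35328, 'threads_per_core': 6}
    print(max_scale_exaflops())  # 16.0
```

Four chips give 1 petaFLOP and 5888 cores, matching the key features list, and 64,000 chips at 250 teraFLOPS each give the quoted 16 exaflops.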

The IPU-M2000 has a high-efficiency cooling system: the chassis includes a large cavity that carries heat from the chips to a large heatsink and heat-pipe array. The board also carries an M.2 connector, an M.2 slot and a board management controller (BMC), which round out an already powerful system.

The 1U boxes connect to their host servers over 100Gb Ethernet, using RDMA over Converged Ethernet (RoCE) for low-latency access. Using Ethernet rather than PCIe avoids the bottlenecks and costs of PCIe connectivity and allows a flexible CPU-to-accelerator ratio.
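Because the host link is a network connection rather than a card in a PCIe slot, the number of IPUs each host server drives is decided by cabling. The Python sketch below illustrates this with a few example host/unit combinations and a rough host-link bandwidth figure. The 100Gb/s-per-RNIC-port rate is an assumption based on the 100Gb Ethernet figure above, and the bandwidth ignores protocol overhead.

```python
from fractions import Fraction

IPUS_PER_M2000 = 4
RNIC_PORTS_PER_M2000 = 2   # from the front-panel description
PORT_GBPS = 100            # assumption: each RNIC port runs at 100Gb Ethernet


def host_link_gb_per_s(m2000_units: int, ports_per_unit: int = RNIC_PORTS_PER_M2000) -> float:
    """Raw host-link bandwidth in gigabytes per second (8 bits per byte, no overhead)."""
    return m2000_units * ports_per_unit * PORT_GBPS / 8


def ipus_per_host(hosts: int, m2000_units: int) -> Fraction:
    """CPU-to-accelerator ratio, expressed as IPUs per host server."""
    return Fraction(m2000_units * IPUS_PER_M2000, hosts)


if __name__ == "__main__":
    # The ratio depends only on how many hosts and units are cabled together.
    for hosts, units in [(1, 1), (1, 4), (2, 4), (3, 4)]:
        print(f"{hosts} host(s), {units} x IPU-M2000: {ipus_per_host(hosts, units)} IPUs per host")
    # One unit cabled with a single RNIC port, as in the single-cable direct attach case.
    print(host_link_gb_per_s(1, ports_per_unit=1))
```

Using `Fraction` keeps uneven ratios such as 16/3 IPUs per host exact, which is the point: nothing forces the ratio to be a whole number of cards per server.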

AI applications in data centres tend to be performance-limited rather than cost-sensitive, whereas edge AI inference is naturally cost- and power-sensitive. The Mk2 is therefore aimed squarely at performance and looks to outperform the competition: compared with the previous Mk1 IPU, it offers 20% more cores, 3x more on-die SRAM and 16x greater scalability. New system software delivered with this generation also improves scalability, deployment and management.

Summary

Overall, we are impressed with the system, which we found to be well-built, feature-rich and stylish.

The Graphcore IPU-M2000 is one of the most powerful accelerators on the market, supported by Graphcore's Poplar SDK to provide a complete, scalable platform for accelerated machine intelligence development.

The box has built-in scale-out networking, allowing users to grow from a small development system to large multi-rack installations while saving money by using standard Ethernet networking instead of traditional InfiniBand.

You can start with a single unit and expand to thousands, since the design is flexible and adaptable. It can be used as an independent system or as eight racks of 16 closely connected systems. Regardless of deployment size, the IPU-Fabric uses Ethernet tunnelling to link tiles and other IPUs while preserving the same programming paradigm and Bulk Synchronous Parallel (BSP) execution model.
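The bulk-synchronous model referred to above alternates a local compute phase, a global synchronisation and an exchange phase in which tiles only communicate. The Python sketch below illustrates that idea with ordinary threads standing in for tiles and `threading.Barrier` standing in for the hardware sync. It is not Poplar code; the tile count, the doubling "compute" step and the ring exchange pattern are hypothetical choices made purely for illustration.

```python
import threading


def run_bsp(num_tiles: int = 4, supersteps: int = 3) -> list[int]:
    """Simulate BSP supersteps: compute locally, sync, exchange, sync."""
    values = list(range(num_tiles))   # each hypothetical tile's local state
    outbox = [0] * num_tiles          # value each tile publishes this superstep
    barrier = threading.Barrier(num_tiles)

    def tile(tid: int) -> None:
        for _ in range(supersteps):
            # Compute phase: each tile works only on its own local data.
            outbox[tid] = values[tid] * 2
            # Sync: no tile starts exchanging until every tile has finished computing.
            barrier.wait()
            # Exchange phase: each tile reads its left neighbour's result (ring pattern).
            values[tid] = outbox[(tid - 1) % num_tiles]
            # Sync again so no outbox is overwritten before every tile has read it.
            barrier.wait()

    threads = [threading.Thread(target=tile, args=(t,)) for t in range(num_tiles)]
    for th in threads:
        th.start()
    for th in threads:
        th.join()
    return values


if __name__ == "__main__":
    print(run_bsp())  # [8, 16, 24, 0]
```

Because compute and exchange never overlap, the result is deterministic regardless of how the threads are scheduled. The same property lets a BSP program keep its behaviour as it is spread across more tiles or chips.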

The Poplar SDK includes tools for developing and running programs on IPU hardware using industry-standard machine-learning frameworks such as TensorFlow, PyTorch, ONNX and PaddlePaddle. The platform also supports standard converged-infrastructure management tools such as OpenBMC, Redfish, Docker containers, and orchestration with Slurm and Kubernetes. Additionally, Graphcore are continually adding support for new platforms.

Graphcore IPU M2000 - Key Features

The IPU-M2000 is characterised by the following high-level features:

- Compute
  - 4 x Colossus™ Mk2 GC200 IPU
  - 1 petaFLOP AI compute
  - 5888 independent processor cores

- Memory
  - Up to 450GB Exchange Memory™
  - 180TB/s Exchange Memory™ bandwidth

- Communications
  - 2.8Tbps ultra-low latency IPU-Fabric™
  - Direct connect or via Ethernet switches
  - Collectives and all-reduce operations support

- IPU Gateway SoC
  - Arm Cortex quad-core A-series SoC

- Form Factor
  - Industry standard 1U

- Software
  - Poplar SDK
  - PopVision visualisation and analysis tools

- Converged Infrastructure Support
  - Virtual-IPU comprehensive virtualisation and workload manager support
  - Support for Slurm workload manager
  - Support for Kubernetes orchestration
  - OpenBMC management built-in
  - Grafana system monitoring tool interface

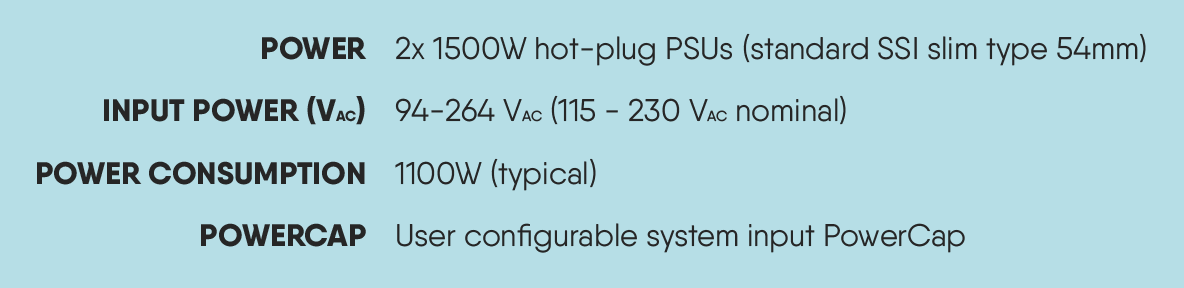

Graphcore IPU M2000 Power Consumption

Our solutions portfolio includes head-nodes such as the Boston Graphcore Poplar Server as a starting point for your IPU solution. Building on this, we offer pre-tested, ready-to-go solutions such as the Graphcore IPU-POD16, with four IPU-M2000 units, or the IPU-POD64, with 16 units.
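The IPU-POD names track the total number of IPUs, since each IPU-M2000 holds four. The Python sketch below works out the IPU count and peak AI compute for each configuration mentioned above. As with any peak figure, it assumes compute scales linearly with the number of units, which real workloads will not always achieve.

```python
# IPU-M2000 units per configuration, as listed in this article.
CONFIGS = {"IPU-M2000": 1, "IPU-POD16": 4, "IPU-POD64": 16}

IPUS_PER_M2000 = 4           # four GC200 IPUs per IPU-M2000
PETAFLOPS_PER_M2000 = 1.0    # peak AI compute per IPU-M2000


def pod_summary(units: int) -> tuple[int, float]:
    """Return (IPU count, peak petaFLOPS) for `units` IPU-M2000s, assuming linear scaling."""
    return units * IPUS_PER_M2000, units * PETAFLOPS_PER_M2000


if __name__ == "__main__":
    for name, units in CONFIGS.items():
        ipus, pflops = pod_summary(units)
        print(f"{name}: {units} x IPU-M2000 -> {ipus} IPUs, {pflops:g} petaFLOPS peak")
```

Running it shows the IPU-POD16 at 16 IPUs and 4 petaFLOPS peak, and the IPU-POD64 at 64 IPUs and 16 petaFLOPS peak.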

Boston Labs is committed to enabling our customers to make well-informed decisions about the hardware, software, and overall solution that will best meet their needs. If you'd like to try out the Graphcore IPU-M2000, please email [email protected] or call 01727 876100, and one of our experienced sales engineers will gladly lead you through the process of building the ideal solution for you.

Written by:

Hamza Sabir

Apprentice Technical Support Engineer